What Your Page Looks Like To A Website Crawler

Google doesn’t see a website the way a user does. This raises the question of how Google even knows what’s on a page and how it provides accurate search results. Google explores a page through a process called a crawl. Crawling is when Google’s robots visit a website and look at the HTML code on its different pages. In essence, the website crawler works through the pages it can find under a site’s URL and records the information on each one. Since Google can’t categorize images well enough yet, the website crawler mostly records the text found in the HTML code. The things that matter most to the Google index are elements like HTML headers, title tags, and website headers.
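
To make the idea of a text-only view concrete, here is a minimal sketch of how a crawler might pull the title, headers, and plain text out of a page while ignoring images. It uses Python's standard `html.parser` module; the class name `TextCrawlerView` and the sample page are made up for illustration, and this is not how Google's crawler is actually built.

```python
from html.parser import HTMLParser


class TextCrawlerView(HTMLParser):
    """Collects the title, header text, and body text from one HTML page."""

    HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
    SKIPPED_TAGS = {"script", "style"}
    TRACKED_TAGS = HEADING_TAGS | SKIPPED_TAGS | {"title"}

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = []   # list of (tag, text) pairs
        self.body_text = []  # every other piece of visible text
        self._current = None  # the tracked tag we are inside, if any

    def handle_starttag(self, tag, attrs):
        # Images and other untracked tags are simply ignored here.
        if tag in self.TRACKED_TAGS:
            self._current = tag

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        if self._current == "title":
            self.title += text
        elif self._current in self.HEADING_TAGS:
            self.headings.append((self._current, text))
        elif self._current in self.SKIPPED_TAGS:
            pass  # script and style content is not readable text
        else:
            self.body_text.append(text)


# Hypothetical page used only for this example.
SAMPLE_PAGE = (
    "<html><head><title>Blue Widgets</title></head>"
    "<body><h1>Widget Guide</h1><img src='w.png'>"
    "<p>Widgets are handy.</p></body></html>"
)

view = TextCrawlerView()
view.feed(SAMPLE_PAGE)
print(view.title, view.headings, view.body_text)
```

Notice that the image leaves no trace in the result. Only the title, the header, and the paragraph text survive.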

Indexing

Once Google has crawled a website, it indexes it. Indexing looks at all the content on the site and identifies keywords that are used throughout. As mentioned before, tags and titles carry more weight in how a page ranks, so using a keyword in these areas allows Google to better categorize your site. Once Google has indexed your site, it can serve up your pages as search results. Matt Cutts from Google has a great video that explains how Google crawls the web and indexes the pages it finds. The Google index is like braille in that it is a body of text information that isn’t as rich as the images or graphics on the page. Knowing this, you should use SEO tools to build a site that is easy for Google to crawl and index.
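
As a rough illustration of keywords counting for more in titles and headers, the sketch below scores each word on a page by where it appears. The weights (3 for the title, 2 for headers, 1 for body text), the stop-word list, and the function names are all assumptions picked for the example. Google's real weighting is not public and is far more involved.

```python
import re
from collections import Counter

# A tiny stop-word list, assumed for this example only.
STOP_WORDS = {"a", "an", "and", "the", "of", "to", "is", "in", "for", "are"}


def tokenize(text):
    """Lowercase the text and return its words, minus stop words."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return [word for word in words if word not in STOP_WORDS]


def score_keywords(title, headings, body,
                   title_weight=3, heading_weight=2, body_weight=1):
    """Score each word by where it appears; the weights are illustrative."""
    scores = Counter()
    for word in tokenize(title):
        scores[word] += title_weight
    for heading in headings:
        for word in tokenize(heading):
            scores[word] += heading_weight
    for word in tokenize(body):
        scores[word] += body_weight
    return scores


scores = score_keywords(
    title="Blue Widgets",
    headings=["Widget Guide"],
    body="Widgets are handy and blue.",
)
print(scores.most_common(3))
```

In this toy example "widgets" and "blue" come out on top because they appear in the title as well as the body, while "handy" only appears in the body.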

How To Use SEO Tools To Make Your Site Easier To Crawl

Creating an SEO website allows Google to crawl and index your site more frequently, which can also improve your page rank. This raises the question of how to build an SEO website structure that Google can crawl quickly. The base of any SEO website is a well-organized structure that is easy to follow. To start, draw up a sitemap to identify content hubs. With these content hubs identified, the links to those hub pages should be H1 HTML headers. This tells Google that these pages are of high importance to the site.

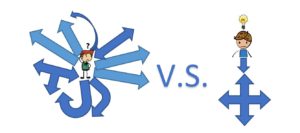

These content hubs should be the main focus of the website’s structure, and other pages on the site should point back to them. Don’t go overboard and link every page on your site to every other page; this can create a messy web of links that makes the site more difficult to crawl. Using title tags is another way to tell Google what is most important on your page without creating a mess of links. The title of a page is a main identifier of what that page is about.
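
One way to check a hub structure like this is to write the site's internal links down as a simple map and count what points where. The sketch below assumes a hand-built dictionary of page paths. The paths, the hub set, and both function names are hypothetical.

```python
from collections import Counter


def inbound_link_counts(links):
    """Count how many distinct pages link to each page, ignoring self-links."""
    counts = Counter()
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts


def pages_missing_hub_link(links, hubs):
    """Return the non-hub pages that do not link to any content hub."""
    return sorted(
        page
        for page, targets in links.items()
        if page not in hubs and not hubs.intersection(targets)
    )


# Hypothetical site: each page maps to the internal pages it links to.
SITE_LINKS = {
    "/": ["/guides/", "/products/"],
    "/guides/": ["/guides/seo-basics", "/"],
    "/guides/seo-basics": ["/guides/"],
    "/products/": ["/products/widget"],
    "/products/widget": ["/products/widget", "/about"],
    "/about": [],
}
HUBS = {"/guides/", "/products/"}

print(inbound_link_counts(SITE_LINKS))
print(pages_missing_hub_link(SITE_LINKS, HUBS))
```

Here `/products/widget` and `/about` are flagged because neither points back to a hub, which is the kind of gap worth fixing before adding more links elsewhere.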

Keywords

Having the right structure is important, but having the proper keywords can also improve how Google indexes your site. SEO tools like Google AdWords can be used to find keywords related to the tags and titles on your page. For instance, if your website header was "HTML", Google AdWords would recommend other keywords related to HTML. This helps the indexing process because Google now has multiple reference points for understanding what the page is about. Make sure these keywords are also used in the HTML headers, tags, and titles.
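
Once you have a keyword list, a quick script can confirm that each keyword actually made it into the title and headers. The sketch below is a simple substring check. The function name `keyword_coverage` and the sample keywords, title, and headers are invented for the example.

```python
def keyword_coverage(keywords, title, headings):
    """Report, per keyword, whether it appears in the title and in any header."""
    title_lower = title.lower()
    headings_lower = [heading.lower() for heading in headings]
    report = {}
    for keyword in keywords:
        needle = keyword.lower()
        report[keyword] = {
            "in_title": needle in title_lower,
            "in_headings": any(needle in heading for heading in headings_lower),
        }
    return report


# Hypothetical keywords and page text.
report = keyword_coverage(
    keywords=["html", "css", "web design"],
    title="HTML Basics for Web Design",
    headings=["Learning HTML", "Styling with CSS"],
)
for keyword, places in report.items():
    print(keyword, places)
```

Any keyword that shows `False` in both places is a candidate for working into a title or header.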

With all this set up, the site will be easy for Google to crawl, but don’t forget to allow Google to index it. The robots.txt file tells Google which pages it is allowed to crawl, so make sure it doesn’t block the pages you want to show up in search results. Getting this step wrong will cause all your hard work to improve your page rank to be for nothing. Torquemag.com has a good list of other small errors people make when it comes to helping Google index a site more efficiently.
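
To double-check a robots.txt file before publishing it, you can test a few URLs against it with Python's standard `urllib.robotparser` module. The rules, the example.com URLs, and the helper name `is_crawlable` below are placeholders. Note too that Python's parser applies the first matching rule in file order, which may differ from how Google resolves conflicting rules, so treat this as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the admin area, allow everything else.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /
"""


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for url in ("https://example.com/blog/post", "https://example.com/admin/settings"):
    print(url, is_crawlable(ROBOTS_TXT, url))
```

A file containing `Disallow: /` under `User-agent: *` would report every URL as blocked, which is exactly the mistake that undoes the rest of the work.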